8 Questions State and Regional Agencies Should Ask When Evaluating a Waste and Recycling Outreach Platform

by Routeware Team • April 6, 2026

State and regional agencies are being asked to do more than publish recycling guidance.

Today, they are expected to support local programs, improve consistency across jurisdictions, increase participation, reduce contamination, and demonstrate measurable progress toward broader waste reduction goals. At the same time, many are still working through static web pages, downloadable PDFs, disconnected local processes, and manual reporting that makes it nearly impossible to see what is happening on the ground.

That creates a real tension. How do you make waste and recycling information more consistent, more accessible, and more measurable across many districts or communities — without forcing every local program into the same mold?

That is where the right outreach platform matters. But not all platforms are built for the complexity of a multi-district or statewide program. The questions below are designed to help state and regional agencies move past the marketing language and evaluate what a platform can actually do.

1. Can the platform support a top-down structure without eliminating local control?

For most state and regional agencies, the model is not a single city using a standalone tool. It is a broader structure where one agency oversees the contract and the data, while local districts or communities still need the ability to manage their own content, rules, and messaging.

That distinction matters enormously in practice. The state needs visibility across all participating districts — ideally through a single dashboard that shows engagement, search trends, and program activity in aggregate. But the local solid waste district in a rural county needs to be able to update its own drop-off locations, manage its own accepted materials list, and push its own communications without waiting on the state or the vendor.

The right platform handles both levels simultaneously. Central oversight and local flexibility are not competing requirements; both should be deliberate design features. If a platform cannot give a state agency a unified view while also giving individual districts genuine autonomy to manage local content, it is not built for this kind of program.

A useful test during evaluation: ask the vendor to show you what the state-level dashboard looks like when 20, 30, or 50 districts are active. Then ask them to show you how a district admin makes a change without involving the state or the vendor. Both should be straightforward.

2. How easily can local disposal rules be customized — and who does the work?

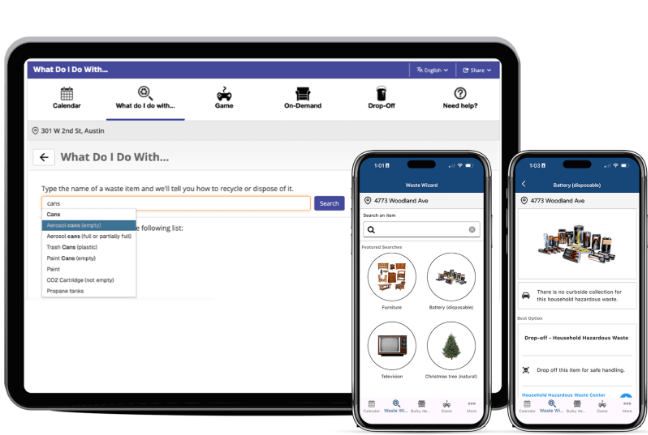

One of the most common and consequential challenges in multi-jurisdiction programs is deceptively simple: what is accepted in one area is not always accepted in another.

This shows up most acutely in counties or districts served by multiple haulers, each with different material recovery facilities accepting different items. A resident on the north side of a city may have curbside access to plastic clamshells. A resident 10 miles away may not. Generic recycling guidance fails both of them — it either gives the wrong answer to one or an incomplete answer to both.

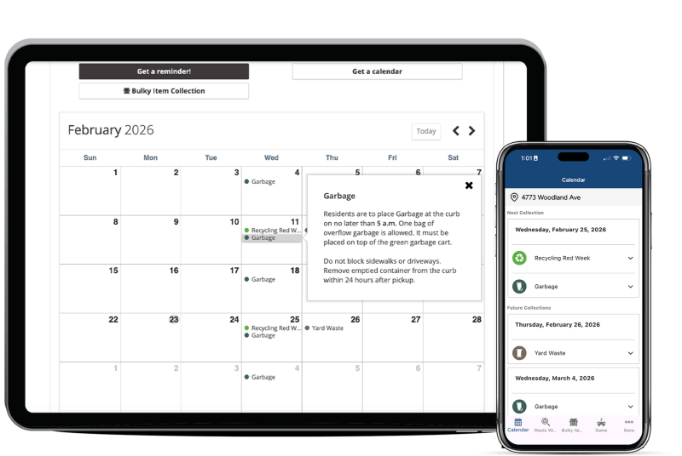

A strong platform addresses this with address-based configuration. When a resident enters their address or enables location access on a mobile app, the information they receive — which materials are accepted, where the nearest drop-off sites are, what the collection schedule looks like — reflects the rules that actually apply to their household, not a county-wide average.
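As a rough illustration of address-based configuration, the sketch below resolves a household to a service zone and returns the rules for that zone. Everything in it is hypothetical: the `ServiceZone` type, the zone names, the sample materials, and the ZIP-code lookup that stands in for real address geocoding. It does not describe any particular vendor's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceZone:
    """Hypothetical record of the rules for one hauler's service area."""
    name: str
    accepted_curbside: frozenset
    collection_day: str


# Illustrative data only: two zones in the same county with different rules.
ZONES = {
    "north": ServiceZone(
        "North hauler zone",
        frozenset({"cardboard", "plastic clamshells"}),
        "Tuesday",
    ),
    "south": ServiceZone(
        "South hauler zone",
        frozenset({"cardboard"}),
        "Thursday",
    ),
}

# Stand-in for geocoding (assumption): a real system would likely map a
# full street address to a zone boundary rather than use a ZIP code.
ZIP_TO_ZONE = {"00001": "north", "00002": "south"}


def rules_for(zip_code: str) -> ServiceZone:
    """Return the zone whose rules apply to a household, or raise if unknown."""
    try:
        return ZONES[ZIP_TO_ZONE[zip_code]]
    except KeyError:
        raise LookupError(f"No service zone configured for {zip_code}") from None


def is_accepted(zip_code: str, material: str) -> bool:
    """Answer 'can this go in my curbside bin?' for one household."""
    return material.lower() in rules_for(zip_code).accepted_curbside
```

Under these assumptions, `is_accepted("00001", "plastic clamshells")` and `is_accepted("00002", "plastic clamshells")` give different answers for two households in the same county, which is the point: the answer follows the household, not a county-wide average.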

That level of specificity only works if the underlying data can be maintained accurately. Which brings up the second part of this question: who actually does the updating?

If every local change requires submitting a support ticket to the vendor, the system becomes a bottleneck. Haulers change what they accept. Drop-off sites move. Special collection events get added or cancelled. In a program covering dozens of districts, those changes happen constantly. The platform needs to be manageable by district program staff — people who may not be technically sophisticated but who have the local knowledge to keep information accurate. If the back-end interface requires IT involvement for routine content updates, that is a practical problem for long-term program sustainability.

3. Are we replacing static information with something residents will actually use?

Most agencies already have recycling information published somewhere. The question is whether residents can find it, trust it, and act on it.

The honest answer, for most county and municipal websites, is "not really." Recycling guidance tends to live several clicks deep, in a downloadable PDF that covers a handful of material types and was last updated at some unknown point in the past. A resident trying to figure out what to do with a rechargeable battery, a piece of latex-painted trim, or a broken piece of furniture is unlikely to find a useful answer quickly. If they do not find it quickly, they will either call the office or make their best guess, and that guess frequently ends up in the wrong stream.

The problem is not that the information does not exist. It is that it is not accessible in the format and on the channels residents actually use.

A platform that addresses this well offers searchable disposal guidance covering hundreds of material types, not just the most common dozen. It works on mobile. It returns results based on the resident’s specific address. It stays current because local staff can update it without vendor involvement. And it meets residents where they are — whether that is a web browser, a mobile app, or a push notification they receive before their collection day.

The standard to aim for is not “better than a PDF.” It is: does a resident who has never heard of this program get a useful answer in under 30 seconds?

4. What data will we actually get back — and can we act on it?

This is one of the most important questions in the evaluation process, and one of the most frequently undervalued.

A lot of platforms can publish information. Fewer help agencies understand what residents are actually doing with it.

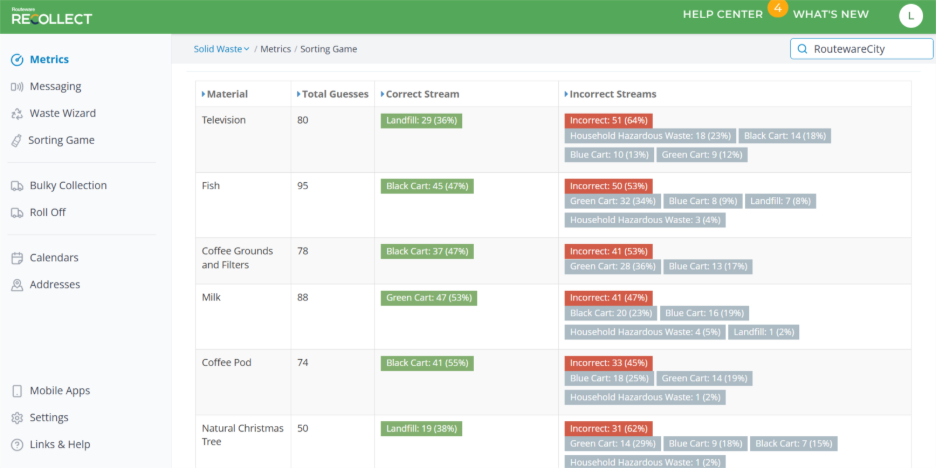

For a state or regional agency, meaningful data looks like this: which materials are residents searching for most often, and does that vary by district? Which areas have strong platform engagement and which have weak engagement? Are residents engaging primarily on mobile or web, and does that differ by geography or demographics? Which topics generate repeated searches, suggesting ongoing confusion rather than resolved questions? How do engagement patterns change over time, and what happens when a district runs a targeted education campaign?

That kind of information is valuable because it turns outreach from a broadcast activity into a feedback loop. Instead of pushing information and assuming it landed, agencies can see what is resonating, identify where confusion is concentrated, and use that to sharpen programming decisions.

At the state level, this is especially powerful. Aggregate data across all participating districts creates a picture that no individual district could produce on its own — a statewide view of what residents are asking, what is confusing them, and where education investment is likely to have the most impact.
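To make the district-versus-statewide distinction concrete, here is a minimal sketch of how search activity might be rolled up at both levels. The event format, district names, and function names are assumptions for illustration; they are not a real export schema or dashboard API.

```python
from collections import Counter, defaultdict

# Hypothetical search events as (district, material searched) pairs.
EVENTS = [
    ("District A", "batteries"),
    ("District A", "batteries"),
    ("District A", "paint"),
    ("District B", "paint"),
    ("District B", "furniture"),
    ("District B", "paint"),
]


def top_searches_by_district(events, n=1):
    """District-level view: the n most-searched materials in each district."""
    per_district = defaultdict(Counter)
    for district, material in events:
        per_district[district][material] += 1
    return {d: counts.most_common(n) for d, counts in per_district.items()}


def statewide_totals(events):
    """State-level view: search counts across all districts combined."""
    return Counter(material for _, material in events)
```

With this sample data, batteries lead in one district and paint in the other, while the combined totals show paint as the most-searched material overall. Neither view alone tells the whole story, which is why it is worth asking a vendor to show both.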

Ask any vendor you evaluate to walk you through the analytics dashboard in detail. Ask specifically what a state-level administrator sees versus what a district-level administrator sees. And ask whether the data can be exported for use in your own reporting and grant documentation.

5. Does the platform support education, not just information delivery?

Publishing information and changing behavior are different things. That distinction matters especially in recycling and waste management, where contamination rates are often driven not by indifference but by genuine uncertainty.

Residents who put the wrong items in the recycling bin are frequently not careless — they are making their best guess in the absence of clear, accessible guidance. The industry term “wish-cycling” captures it well: if it seems like it might be recyclable, it goes in the bin. The result is contaminated loads that cost haulers and municipalities real money.

A platform that supports actual behavior change needs to do more than answer a single question on demand. It needs to provide guidance that is easy to find before a decision is made — not just when a resident thinks to ask. That means proactive features like timely reminders, event-based notifications, and seasonally relevant featured content that puts the right information in front of residents at the right moment.

It also means thinking about how to reach different audiences. Interactive educational tools, including gamified recycling experiences, have shown real uptake particularly with younger residents and school-age children. Several communities have used these tools as part of school-based programs, with the added effect of sending children home with better recycling habits that influence household behavior more broadly. That is a different kind of reach than a website update achieves.

For state and regional agencies trying to move aggregate contamination numbers, this breadth of engagement (information on demand, proactive education, and interactive tools) is more likely to produce measurable outcomes than any single channel alone.

6. Does it align with the way people actually access information today?

A resident-facing platform must meet people where they are, and where they are is increasingly on their phones.

This is not just a preference for younger demographics. Smartphone adoption is broad enough that a platform without a strong mobile experience is leaving a significant portion of its potential audience underserved. That means a mobile app with full feature parity to the web experience — not a stripped-down version — and the ability to send push notifications that residents actually receive and act on.

White-labeling is worth raising here, because it matters more than it might seem. When a resident searches for their city or county in the app store and finds an app that says “City of [their city]” rather than a vendor brand name, the likelihood they will download it, trust it, and use it regularly is meaningfully higher. The platform should feel like a local government service, not a third-party tool.

For agencies managing programs across many districts, white-labeling also means each district can have its own branded presence without requiring separate infrastructure. The state sees one unified program; each district’s residents experience something that feels local and official.

It is also worth asking about accessibility compliance. Federal web accessibility requirements are not optional for public-facing government tools, and the agency managing the contract is ultimately responsible for ensuring the platform meets current standards. Ask any vendor you evaluate for documentation of accessibility compliance, not just a claim that the platform is compliant.

7. How will this reduce manual work for staff — at every level?

A good outreach platform should not just improve the resident experience. It should make life meaningfully easier for the program staff behind it.

At the district level, the most direct gains come from call and email deflection. When residents can get clear, accurate, address-specific answers to common questions through a self-service tool, the volume of repetitive inquiries to district staff drops. At the community level, this has translated to dramatic reductions in call volume — in some cases exceeding 90 percent — simply because information that used to require a staff response is now findable in seconds.

At the state level, the gains are different but equally significant. Instead of chasing down PDF submissions, coordinating manual reporting from dozens of districts, and trying to build a coherent picture of program performance from disparate sources, state staff can access consolidated data from a single dashboard. That is time that can go toward program development and district support rather than data wrangling.

There is also the question of content maintenance. If keeping the platform accurate requires constant vendor involvement, program staff will always be at the mercy of someone else’s timeline. The platform should be designed so that district staff can make routine updates — materials, locations, events, communications — independently and quickly. That is what makes a program sustainable at scale.

8. Can the platform scale while still giving us one clear view of the program?

This is the test that ties everything else together.

A statewide or regional program does not just need a set of local tools that happen to be sourced from the same vendor. It needs a model that can scale — adding districts without creating proportional increases in overhead, maintaining quality and accuracy across a large number of local instances, and surfacing useful information upward without requiring manual aggregation.

The tricky part is that scaling in two directions at once creates inherent tension. The more local flexibility a platform supports, the harder it can be to maintain a coherent state-level view. And the more the state standardizes for the sake of oversight, the harder it is for local programs to reflect their actual, specific conditions.

The right platform resolves that tension by design. State administrators should be able to see aggregate engagement across all participating districts, identify where performance is strong and where it needs attention, push statewide communications or updates when needed, and drill down into any individual district for more detail — without that level of visibility requiring manual effort from anyone at the district level.

Conversely, district administrators should be able to manage their own programs fully — updating content, adjusting materials lists, scheduling communications — without needing state approval for routine changes or vendor involvement for anything that is not a product issue.

Ask your vendor to demonstrate what happens when a new district is added to the program. How long does it take to get them configured and active? How much does it require from the state versus from the district versus from the vendor? The answer tells you a lot about whether the platform was genuinely designed for multi-district programs or whether it was adapted from a single-community tool.

What This Comes Down To

For state and regional agencies, evaluating a waste and recycling outreach platform is not just a communications decision. It is a program design decision that affects how data flows, how local programs are supported, how residents experience their local services, and how the agency demonstrates measurable progress toward broader sustainability goals.

The right platform can help unify messaging without flattening local differences, replace static information with something residents actively engage with, shift routine inquiries away from staff, and create genuine visibility into what is happening across the entire program. Communities using ReCollect have seen contamination rates drop by nearly 30 percent, call volumes fall by as much as 99 percent, and tens of thousands of dollars saved by moving away from printed materials.

Those outcomes do not happen automatically. They happen because the platform was matched to the right program model, configured with accurate local data, and maintained by staff who could easily incorporate it into their existing workflows.

If your agency is evaluating how to support multiple districts with better recycling education, more consistent outreach, and stronger program visibility — we would like to talk.

Learn more about Routeware ReCollect or contact our team to start the conversation.

Routeware ReCollect is trusted by over 70 million residents across North America.